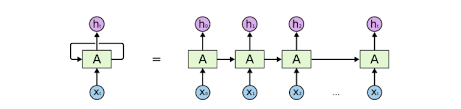

Recurrent Neural Networks (RNNs) are a powerful and widely used class of neural network architectures for modeling sequence data. The basic idea behind RNN models is that each new element in the sequence contributes some new information, which updates the current state of the model.
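To make the state-update idea concrete, here is a minimal sketch of a vanilla RNN cell in plain Python. The names (`rnn_step`, `run_rnn`, `W_xh`, `W_hh`, `b_h`), the tanh activation, and the random untrained weights are assumptions chosen for illustration, not something this article prescribes.

```python
import math
import random

def matvec(W, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def rnn_step(x_t, h_prev, W_xh, W_hh, b_h):
    """One vanilla RNN update: h_t = tanh(W_xh x_t + W_hh h_prev + b_h)."""
    from_input = matvec(W_xh, x_t)      # information from the new element
    from_state = matvec(W_hh, h_prev)   # information carried over from the past
    return [math.tanh(a + b + c) for a, b, c in zip(from_input, from_state, b_h)]

def random_matrix(rows, cols, rng, scale=0.1):
    """Small random weights (untrained, for illustration only)."""
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]

def run_rnn(inputs, hidden_size, seed=0):
    """Feed a sequence through the cell; return the state after each element."""
    rng = random.Random(seed)
    input_size = len(inputs[0])
    W_xh = random_matrix(hidden_size, input_size, rng)
    W_hh = random_matrix(hidden_size, hidden_size, rng)
    b_h = [0.0] * hidden_size
    h = [0.0] * hidden_size  # initial state: nothing seen yet
    states = []
    for x_t in inputs:
        h = rnn_step(x_t, h, W_xh, W_hh, b_h)
        states.append(h)
    return states

# A toy sequence of three 3-dimensional inputs.
sequence = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
states = run_rnn(sequence, hidden_size=4)
```

Each entry of `states` depends on the current input and on everything that came before it, which is what lets the model carry context along the sequence.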

The Importance of Sequence Data

An immensely important and useful type of structure is sequential structure. In data science terms, this fundamental structure appears in many datasets, across many domains.

- In computer vision, video is a sequence of visual content evolving over time

- In speech, we have audio signals

- In genomics, gene sequences

- In healthcare, we have longitudinal records

- In stock markets, financial data

Use cases

NLP

A great application is Natural Language Processing (NLP). Many practitioners have published RNN-based language models online. Such a model can be trained on a large corpus, for example Shakespeare's poems, and after training it can generate its own Shakespearean-style poems that can be very hard to tell apart from the originals!

PANDARUS: |
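One common way to set up such a language model is to train it at the character level: the network reads one character and is asked to predict the next one. The sketch below only shows how the training pairs could be built from raw text; the variable names and the single-line corpus are illustrative assumptions, and the model and training loop are left out.

```python
# A one-line stand-in for a large Shakespeare corpus.
text = "Shall I compare thee to a summer's day?"

# Build a vocabulary of the distinct characters and map each to an integer id.
vocab = sorted(set(text))
char_to_id = {ch: i for i, ch in enumerate(vocab)}
id_to_char = {i: ch for ch, i in char_to_id.items()}

ids = [char_to_id[ch] for ch in text]

# Next-character prediction: the target at each position is the following character.
inputs = ids[:-1]
targets = ids[1:]

def decode(token_ids):
    """Turn a list of ids back into a string."""
    return "".join(id_to_char[i] for i in token_ids)
```

After training, text is generated by repeatedly sampling a character from the model's predicted distribution and feeding it back in as the next input.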

Machine Translation

Another amazing application of RNNs is machine translation. Two RNNs are trained jointly: one (the encoder) reads the source sentence and the other (the decoder) generates the translation. The training input consists of pairs of sentences in different languages. For example, you feed the network an English sentence paired with its French translation. With enough training data, you can give the network a new English sentence and it will translate it into French. This model is called a Sequence-to-Sequence (seq2seq) model or Encoder-Decoder model.
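The encoder-decoder data flow can be sketched in a few lines of plain Python. Everything here is an assumption made for illustration: the `TinyRNN` class, the `translate` helper, the vocabulary sizes, the greedy decoding, and the random untrained weights. The output is therefore meaningless as a translation; the point is only how the two RNNs are wired together.

```python
import math
import random

def matvec(W, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def random_matrix(rows, cols, rng, scale=0.5):
    """Random weights (untrained, for illustration only)."""
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]

class TinyRNN:
    """Hypothetical vanilla RNN cell over token ids, with its own embedding table."""
    def __init__(self, vocab_size, hidden_size, rng):
        self.embed = random_matrix(vocab_size, hidden_size, rng)
        self.W_xh = random_matrix(hidden_size, hidden_size, rng)
        self.W_hh = random_matrix(hidden_size, hidden_size, rng)

    def step(self, token_id, h):
        x = self.embed[token_id]
        a = matvec(self.W_xh, x)
        b = matvec(self.W_hh, h)
        return [math.tanh(p + q) for p, q in zip(a, b)]

def translate(source_ids, encoder, decoder, W_out, bos_id, eos_id, max_len=10):
    # Encoder: compress the whole source sentence into one state vector.
    h = [0.0] * len(encoder.W_hh)
    for token_id in source_ids:
        h = encoder.step(token_id, h)
    # Decoder: start from the encoder's final state and emit tokens one by one.
    output, token_id = [], bos_id
    for _ in range(max_len):
        h = decoder.step(token_id, h)
        scores = matvec(W_out, h)  # one score per target-vocabulary token
        token_id = max(range(len(scores)), key=scores.__getitem__)  # greedy choice
        if token_id == eos_id:
            break
        output.append(token_id)
    return output

rng = random.Random(0)
SRC_VOCAB, TGT_VOCAB, HIDDEN = 6, 7, 8
encoder = TinyRNN(SRC_VOCAB, HIDDEN, rng)
decoder = TinyRNN(TGT_VOCAB, HIDDEN, rng)
W_out = random_matrix(TGT_VOCAB, HIDDEN, rng)

# Token ids stand in for the words of a source sentence.
result = translate([2, 4, 1], encoder, decoder, W_out, bos_id=0, eos_id=1)
```

In a real system both networks and the output projection would be trained together on the sentence pairs, so that the decoder learns to produce the target sentence from the encoder's summary of the source.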